There’s something deeply unsettling about watching a robot beat you at your own game. Not just any robot, but one that can read spin, predict trajectories, and fire back returns faster than you can blink. Welcome to the world of Ace, Sony’s table tennis robot that just made history by defeating elite human players in competitive matches.

This isn’t your grandfather’s ping-pong ball launcher. This is something fundamentally different, and it might just change everything we thought we knew about the limits of physical AI.

The Match That Changed Everything

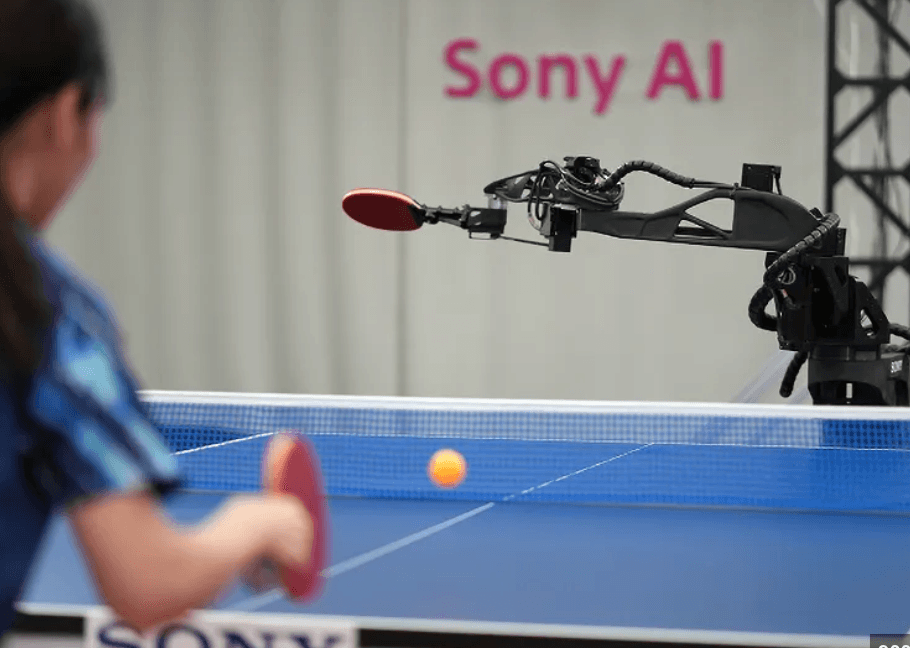

Picture this: December 2025, Tokyo. A professional table tennis player named Mayuka Taira steps up to the table. On the other side isn’t another human; it’s a sleek robotic arm, surrounded by cameras like a mechanical spider’s eyes, gripping a paddle with disturbing precision.

The ball goes back and forth. Once. Twice. Ten times. Taira, who made it to the finals of the 2019 US Open Table Tennis Championships, is fighting for every point. And she’s losing.

When the match ended, Taira’s assessment was chilling in its simplicity: “It is very hard to predict, and it shows no emotion. Because you can’t read its reactions, it’s impossible to sense what kind of shots it dislikes or struggles with.”

She had just been defeated by a machine that learned to play table tennis by playing against itself in simulation, a machine that had never seen her before and knew nothing about her playing style until the moment she served.

Why This Actually Matters (And It’s Not About Ping-Pong)

Before you dismiss this as another tech company’s expensive hobby, let’s talk about what just happened here.

For decades, AI has been crushing humans in games. Deep Blue beat Garry Kasparov at chess in 1997. AlphaGo defeated Lee Sedol at Go in 2016. These were monumental achievements, but they shared one crucial limitation: they all happened in perfectly controlled digital environments.

Chess pieces don’t spin at 9,000 revolutions per minute. Go stones don’t come hurtling toward you at 45 miles per hour while you have 20 milliseconds to decide what to do about it.

Table tennis is different. It’s messy. It’s physical. It requires the kind of split-second sensing and reaction that, until now, only biology could pull off reliably.

The ball’s trajectory changes based on spin that a human player imparts through subtle wrist movements that vary every single time. The opponent adapts their strategy in real-time based on your weaknesses. There’s unpredictability, adversarial interaction, and physical objects moving through space near the very edge of what’s mechanically possible.

Sony’s Ace just crossed that threshold. And that’s why researchers are publishing this in Nature, one of the world’s most prestigious scientific journals, rather than just posting a cool YouTube video.

The Tech That Makes It Possible

Let’s get into the guts of how this actually works, because it’s genuinely fascinating.

The Eyes

Ace doesn’t have two eyes like you do. It has twelve sensors creating a vision system that would make a fighter pilot jealous.

Nine high-speed cameras track the ball’s 3D position in space, operating at 200 frames per second. But here’s where it gets interesting: three additional event-based vision sensors detect changes at the pixel level with sub-millisecond precision.

These event sensors don’t capture frames like a normal camera. Instead, they only register when something changes: specifically, when the ball moves. This is crucial because it means Ace can detect motion that would just be a blur to human eyes or conventional cameras.
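To make the idea concrete, here is a minimal sketch of how an event stream differs from a frame. It is an illustration only, not Sony’s pipeline: real event sensors fire asynchronously per pixel, whereas this toy compares two snapshots. The function name, the threshold, and the 5×5 scene are all assumptions made for the example.

```python
import numpy as np

def events_from_frames(prev, curr, threshold=0.2):
    """Emit (row, col, polarity) for pixels whose log-brightness changed
    by more than `threshold`. Unchanged pixels produce no output at all."""
    eps = 1e-6  # avoids log(0) on fully dark pixels
    delta = np.log(curr + eps) - np.log(prev + eps)
    rows, cols = np.nonzero(np.abs(delta) > threshold)
    polarity = np.sign(delta[rows, cols]).astype(int)  # +1 brighter, -1 darker
    return list(zip(rows.tolist(), cols.tolist(), polarity.tolist()))

# Hypothetical scene: a bright "ball" moves one pixel to the right
# across a static grey background.
prev = np.full((5, 5), 0.2)
prev[2, 1] = 1.0
curr = np.full((5, 5), 0.2)
curr[2, 2] = 1.0

events = events_from_frames(prev, curr)
print(events)  # only the two pixels the ball left and entered
```

Out of 25 pixels, only two report anything. That sparsity is why this style of sensing can run so much faster than reading out full frames.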

The system can track that tiny 40mm ball traveling at speeds exceeding 20 meters per second (that’s about 45 mph), while simultaneously measuring its spin rate, which can exceed 9,000 RPM in professional play.

Total perception latency? 10.2 milliseconds.

To put that in perspective, elite human players have a reaction time of about 230 milliseconds. Ace is perceiving the world more than 20 times faster than the humans it’s playing against.
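A quick back-of-the-envelope check shows what those numbers mean on the table. The sketch below uses only the figures quoted above plus the standard 2.74 m table length; the constant and function names are just illustrative.

```python
BALL_SPEED_M_S = 20.0          # ball speed quoted above
PERCEPTION_LATENCY_MS = 10.2   # Ace's perception latency
HUMAN_REACTION_MS = 230.0      # elite human reaction time
TABLE_LENGTH_M = 2.74          # standard table length
MPH_PER_M_S = 3600 / 1609.344  # metres per second to miles per hour

def distance_travelled_m(speed_m_s, latency_ms):
    """How far the ball flies while the observer is still 'catching up'."""
    return speed_m_s * latency_ms / 1000.0

speed_mph = BALL_SPEED_M_S * MPH_PER_M_S
ratio = HUMAN_REACTION_MS / PERCEPTION_LATENCY_MS
robot_blind_m = distance_travelled_m(BALL_SPEED_M_S, PERCEPTION_LATENCY_MS)
human_blind_m = distance_travelled_m(BALL_SPEED_M_S, HUMAN_REACTION_MS)

print(f"{speed_mph:.1f} mph, ratio {ratio:.1f}x")
print(f"ball travel during latency: robot {robot_blind_m:.2f} m, human {human_blind_m:.2f} m")
print("human lag exceeds table length:", human_blind_m > TABLE_LENGTH_M)
```

At 20 m/s the ball covers about 20 cm during Ace’s perception window, but more than the full length of the table during a human reaction time. That gap is why human players have to anticipate rather than react.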

The Brain

Here’s where things get really interesting. Sony didn’t program Ace with table tennis knowledge. They didn’t show it thousands of hours of championship matches. They couldn’t, because table tennis is too complex to code manually.

Instead, they used deep reinforcement learning: essentially, the robot taught itself by playing millions of practice rallies in simulation.

Think about how you learned to play ping-pong. You probably started by just trying to hit the ball back, failed a bunch of times, gradually developed a feel for the timing and angles, and eventually built up muscle memory and strategic thinking.

Ace did basically the same thing, but compressed into computational time. It learned through trial and error what works and what doesn’t, developing strategies that sometimes look eerily inhuman because they weren’t derived from watching humans play.
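The trial-and-error loop can be shown with a toy far simpler than anything Ace uses. The sketch below is an epsilon-greedy bandit, about the smallest possible form of reinforcement learning, not deep RL and not Sony’s method. The paddle angles, the hidden “best” angle, and the success probabilities are all invented for the illustration.

```python
import random

ANGLES = [-20, -10, 0, 10, 20]  # hypothetical paddle angles, in degrees

def rally_succeeds(angle, rng):
    """Toy simulator: returns land more often near a hidden best angle (10)."""
    p_success = max(0.0, 0.9 - 0.04 * abs(angle - 10))
    return rng.random() < p_success

def train(episodes=5000, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    value = {a: 0.0 for a in ANGLES}  # running estimate of each angle's success rate
    count = {a: 0 for a in ANGLES}
    for _ in range(episodes):
        if rng.random() < epsilon:
            angle = rng.choice(ANGLES)        # explore: try something at random
        else:
            angle = max(ANGLES, key=value.get)  # exploit: use the best guess so far
        reward = 1.0 if rally_succeeds(angle, rng) else 0.0
        count[angle] += 1
        value[angle] += (reward - value[angle]) / count[angle]  # incremental mean
    return value

values = train()
best = max(values, key=values.get)
print(best, {a: round(v, 2) for a, v in values.items()})
```

Nobody tells the agent which angle is good. It fails, occasionally stumbles on something that works, and gradually shifts toward it, which is the same basic loop described above at a vastly smaller scale.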

One player, Rui Takenaka, discovered a fascinating quirk: “When I used a serve with complex spin, Ace also returned the ball with complex spin, which made it difficult for me. But when I used a simple serve, what we call a knuckle serve, Ace returned a simpler ball.”

The robot was mirroring complexity because that’s what worked in simulation. It wasn’t trying to be tricky; it had just learned that matching complexity with complexity was an effective strategy.

The Body

The robotic arm itself is a custom-built piece of engineering optimized specifically for table tennis. It has eight joints, two prismatic (sliding) and six revolute (rotating), which researchers determined was the bare minimum needed to execute the full range of competitive shots.

Three joints control where the paddle goes in space. Two control its angle. Three control how hard and fast the ball gets hit.

The whole system is tuned to handle impacts at speeds up to 19.6 meters per second, with enough precision to return balls landing within millimeters of the table edge, shots that would be winners against most human players.

Total end-to-end latency from seeing the ball to executing the return? 20.2 milliseconds.

For context, that’s faster than most people can press a button after seeing a light turn on.

The Scorecard: How Did It Actually Perform?

Let’s be clear about the results, because the headlines can be misleading.

Sony tested Ace against seven human players in official matches following International Table Tennis Federation rules:

Against five elite amateur players (people who practice 20+ hours per week and have been playing for over a decade): Ace won 7 out of 13 games, securing three match victories out of five.

Against two professional Japanese league players (Minami Ando and Kakeru Sone): Ace won 1 game out of 7 and lost both matches.

But then, in March 2026, something changed. Ace won against Miyuu Kihara, a top-25 player in the World Table Tennis rankings for women’s singles.

So where does this actually put Ace on the skill spectrum? Somewhere in the “elite amateur to low professional” range. Good enough to beat players who’ve dedicated years to the sport, but not yet ready to challenge world champions.

Peter Stone, Chief Scientist at Sony AI, was refreshingly honest about this: “Some people are still better than this system. It was still not quite hitting the ball as hard or with as much spin as people.”

What It Feels Like to Play Against

The human players’ descriptions of facing Ace are genuinely illuminating.

Professional player Mayuka Taira emphasized the psychological challenge: The robot has no tells, no emotions, no patterns based on frustration or fatigue. When you’re playing a human, you’re constantly reading micro-expressions, body language, and emotional state. Against Ace, there’s nothing to read.

Kinjiro Nakamura, who competed in the 1992 Barcelona Olympics, watched Ace execute a particular shot and said something remarkable: “No one else would have been able to do that. I didn’t think it was possible.”

But then he added something even more interesting: “It means that there is a possibility that a human could do it too.”

The robot, unconstrained by assumptions about what’s possible, was showing human experts new possibilities in their own sport.

The Five-Year Journey Nobody Talks About

What the headlines don’t capture is the grinding, incremental progress that made this possible.

“It started with juggling the ball,” said Michael Spranger, President of Sony AI. “Then, cooperative rallies where a human works with the robot to keep the rally going. From there we moved to playing against increasingly stronger table tennis players.”

In the early days, players wore helmets, pads, and protective glasses. The robot was too unpredictable, too potentially dangerous. As the system improved and safety certification came through, the protective gear gradually came off.

This is a detail that sounds minor but represents something profound: creating robots that can safely interact with humans at super-human speeds is incredibly difficult. One bad algorithm, one sensor glitch, and a ball (or worse, a paddle) could be flying at someone’s face at 45 mph.

The fact that Ace can now play matches with professional athletes without any safety gear is itself a major achievement in physical AI.

Why Table Tennis Was the Perfect Test

Sony didn’t choose table tennis randomly. It’s actually one of the most demanding tests you could design for physical robotics.

Speed: The ball moves faster than almost any other sport at this scale. Balls regularly exceed 100 km/h in competitive play.

Precision: The table is small. Margins of error are measured in centimeters. A shot needs to clear a 15.25cm high net and land on a surface that’s only 274cm long and 152.5cm wide.

Spin: This is the killer. A ball can be spinning at thousands of RPM in multiple axes, completely changing how it bounces and flies. Detecting and responding to spin requires visual processing that most robotic systems simply can’t handle.

Adversarial interaction: Your opponent is actively trying to make you fail. They’re adapting to your weaknesses in real-time. You need to adapt right back or you lose.

Real-time decision making: You can’t pause the game to think. Every decision happens in milliseconds or it’s too late.

If you can build a robot that handles all of this, you’ve solved problems that have applications far beyond sports.
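The precision constraint above is easy to underestimate, so here is a rough sketch of it. It treats the ball as a simple projectile in two dimensions with no drag and no spin, which real play never satisfies; the function name, the starting height, and the sample shots are assumptions for illustration, while the net height and table length are the figures quoted above.

```python
import math

TABLE_LENGTH_M = 2.74
NET_HEIGHT_M = 0.1525
NET_X_M = TABLE_LENGTH_M / 2  # the net sits at mid-table
G = 9.81                      # gravitational acceleration, m/s^2

def shot_is_legal(x0, h0, speed, angle_deg):
    """Drag-free, spin-free check that a shot clears the net and lands
    on the far half. x0 is distance from the hitter's end line (must be
    short of the net), h0 is contact height above the table, in metres."""
    theta = math.radians(angle_deg)
    vx = speed * math.cos(theta)
    vy = speed * math.sin(theta)
    if vx <= 0:
        return False  # ball never travels toward the net
    t_net = (NET_X_M - x0) / vx
    h_net = h0 + vy * t_net - 0.5 * G * t_net ** 2
    if h_net <= NET_HEIGHT_M:
        return False  # into the net
    # Time at which height returns to table level (positive root).
    t_land = (vy + math.sqrt(vy ** 2 + 2 * G * h0)) / G
    x_land = x0 + vx * t_land
    return NET_X_M < x_land <= TABLE_LENGTH_M

print(shot_is_legal(0.0, 0.3, 8.0, 5.0))   # moderate pace, slight lift
print(shot_is_legal(0.0, 0.3, 20.0, 0.0))  # flat at 20 m/s: sails long
print(shot_is_legal(0.0, 0.3, 6.0, 0.0))   # too slow and flat: hits the net
```

Under these simplified assumptions, a flat 20 m/s ball hit from 30 cm above the table cannot land on it at all. In real play, heavy topspin is what drags fast shots down onto the table, which is why spin matters so much.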

The Implications Nobody’s Talking About Yet

Here’s where things get really interesting, and potentially uncomfortable.

Manufacturing and Assembly

Imagine factory robots with Ace’s perception and reaction speed. We’re not talking about pre-programmed motions on an assembly line. We’re talking about robots that can handle unexpected variations, adapt to irregular objects, and work alongside humans safely at super-human speeds.

Current industrial robots are largely kept in cages precisely because they can’t reliably react to unexpected human presence. A system with Ace’s sensing and control capabilities changes that equation entirely.

Healthcare and Surgery

The same perception and precision that lets Ace return a spinning ball could enable surgical robots with unprecedented dexterity. Imagine robotic systems that can track and react to tissue movement during beating-heart surgery, or assist in microsurgery where human hand tremor is a limiting factor.

Autonomous Vehicles

The challenges of table tennis (tracking fast-moving objects, predicting trajectories, making split-second decisions in the presence of obstacles) are remarkably similar to the challenges of autonomous driving. Ace’s perception system processes information and makes decisions faster than any current self-driving car.

Search and Rescue

Robots that can navigate unpredictable environments, react to sudden changes, and interact safely with humans at high speed could transform disaster response and rescue operations.

The Defense Application Nobody Wants to Say Out Loud

Let’s address the elephant in the room. A robotic system that can track small, fast-moving objects and intercept them with millimeter precision and 20-millisecond reaction times has obvious military applications.

Sony AI researchers emphasized throughout their work that Ace was designed with strict safety protocols and adheres to official sports rules. But the technology itself is neutral; it can be applied in ways its creators may not have intended.

This is the perpetual dual-use dilemma in robotics research. Every advancement in capability can cut both ways.

The Things Ace Still Can’t Do

It’s important to be clear about the current limitations, because they tell us what problems remain unsolved.

Power and spin: Ace isn’t hitting with the same force or spin that top professionals generate. The hardware is optimized for speed and precision, but raw power is still lacking.

Strategic depth: Against world-class players, Ace’s tactical repertoire isn’t sophisticated enough. It can execute individual shots brilliantly, but multi-shot strategic sequences are still a weakness.

Adaptation speed: Humans can sometimes figure out the robot’s patterns within a match and exploit them. Ace does adapt, but not always fast enough.

Serve diversity: The robot’s serving capabilities are still somewhat limited compared to professionals who can execute dozens of different service variations.

Peter Dürr, the project lead, was candid about this: “This isn’t perfection yet. There’s still room for improvement in the hardware and in the strategy.”

What Makes This Different from Previous Robots

This isn’t the first table tennis robot ever built. The first “robot ping-pong” competition was held in 1983, over forty years ago.

But none of those previous systems could compete with elite players under official rules. They were confined to controlled conditions, simple rallies, or heavily constrained scenarios.

What makes Ace different is the integration: high-speed event-based vision combined with reinforcement learning combined with precision hardware, all working together in real-time under match conditions.

John Billingsley, who pioneered early robot ping-pong research, acknowledged the achievement while noting the massive technological advantage: “They have gone at the task mob-handed, and used sledgehammer techniques.”

His point is valid: Ace uses nine cameras and specialized sensors that give it perception abilities no human could match. It’s not exactly a fair fight.

But that’s also missing the point. The goal wasn’t to create a robot with human-like limitations. The goal was to create a robot that could handle the challenges of human-level physical interaction. On that measure, Ace succeeded.

The Gran Turismo Connection

Here’s a detail that nerds who love context will appreciate: Ace builds on technology Sony AI developed for Gran Turismo Sophy, their superhuman racing AI.

GT Sophy mastered high-speed racing strategy in simulation, learning to navigate complex tracks, make split-second passing decisions, and even demonstrate “sportsmanship” in its racing behavior.

Ace takes those same learning principles and brings them into the physical world. Where GT Sophy dealt with virtual race cars, Ace deals with real spinning balls in three-dimensional space.

This progression from mastering virtual environments to mastering physical ones represents a significant evolution in AI capability.

The Human Element

Despite all the technical sophistication, one of the most touching aspects of the project was the human collaboration required to make it work.

The research team included not just AI researchers and roboticists, but professional table tennis players, coaches, past Olympians, and sport experts. These athletes weren’t just test subjects; they were integral to system evaluation and match design.

The players’ insights into strategy, shot difficulty, and competitive scenarios shaped how Ace was trained and evaluated. This is human expertise guiding machine learning in a very literal sense.

When Kinjiro Nakamura (Barcelona Olympics, 1992) said that seeing Ace’s shots showed him that things he thought impossible were actually achievable by humans, that’s a beautiful inversion of the usual narrative. Instead of the robot making human achievement obsolete, it’s expanding the boundaries of what humans think they can accomplish.

What Happens Next?

Sony AI is clear that this is a research project, not a product. You’re not going to walk into a sporting goods store and buy an Ace system to practice against.

But the underlying technologies (the event-based vision, the reinforcement learning approaches, the high-speed control systems) are all transferable to other applications.

Peter Stone framed it perfectly: “It represents a landmark moment in AI research… Once AI can operate at an expert human level under these conditions, it opens the door to an entirely new class of real-world applications that were previously out of reach.”

The question isn’t whether Ace will become a commercial table tennis product. The question is: what other problems become solvable once you have robots that can see, decide, and act at this speed in unpredictable real-world environments?

The Bigger Picture: What This Tells Us About AI

There’s a pattern in AI development that Ace exemplifies perfectly.

We’ve had AI that can beat humans at chess (symbolic reasoning), Jeopardy (language and knowledge), Go (pattern recognition and strategy), and racing simulations (high-speed decision making).

Each of these represented a different facet of intelligence. But they all shared one thing: they were fundamentally about information processing in controlled environments.

Physical AI (robots that can perceive, reason, and act in real time in the physical world) represents a different frontier entirely. It’s where computation meets physics, where algorithms meet inertia and friction and unpredictable real-world messiness.

Ace demonstrates that this frontier is now crossable. The technology exists to create robots that can handle complex, adversarial, real-time physical tasks at or beyond human expert levels.

That’s not just incremental progress. That’s a qualitative shift in what’s possible.

The Uncomfortable Questions

Let’s end with some questions that this achievement raises but doesn’t answer:

If robots can outperform humans in physical tasks requiring split-second decisions, what happens to jobs that rely on those capabilities? Not just athletes: think about drivers, certain types of surgery, physical security, and more.

How do we ensure these capabilities are used responsibly? The same technology that makes an impressive table tennis robot could, in different hands, have very different applications.

What’s the endpoint here? If Ace is at “elite amateur to low professional” level now, and the researchers are clear there’s room for improvement, how long until no human can beat these systems at physical tasks?

Does it matter? Should we care if robots can outperform humans at sports? Is there something valuable in preserving human excellence in physical domains, or is this just nostalgia for limitations we’re outgrowing?

These aren’t rhetorical questions. They’re genuine challenges that we’re going to need to grapple with as physical AI capabilities continue advancing.

The Bottom Line

A robot just beat professional athletes at a sport requiring human-level reflexes, strategy, and precision. Not in a controlled lab demo. Not with heavily constrained rules. In real matches, under official regulations, against people who’ve devoted their lives to mastering this skill.

That’s remarkable. But what’s even more remarkable is what it represents: we’ve reached a point where AI systems can operate in messy, unpredictable, real-world physical environments at speeds that match or exceed human capabilities.

The implications ripple out far beyond the ping-pong table in Tokyo where Ace made history. We’re looking at a future where robots can work alongside (or instead of) humans in tasks that currently require human-level perception, decision-making, and physical execution.

Whether that excites you or worries you probably depends on your perspective. But either way, it’s happening.

The robot isn’t just returning serves anymore. It’s returning our assumptions about what’s possible, what’s coming, and what role humans will play in an increasingly automated world.

Game, set, match? We’re still in the early sets of a much longer match. But Ace just served notice that the robots are no longer practicing. They’re competing. And in some cases, they’re winning.
