You know that frustrating feeling when you’re trying to explain something complex to someone, but they just don’t have the right tools to execute what you’re describing? That’s essentially been the story of robotics AI for years. We’ve had increasingly smart “brains” powered by artificial intelligence, but they’ve been stuck controlling clunky, imprecise “hands” that can barely manage what a toddler does instinctively.

Genesis AI just announced they’re done with that compromise. And they’re not being subtle about it.

On May 6, 2026, the Paris- and San Carlos-based robotics startup unveiled GENE-26.5, which they’re calling the first AI foundation model that gives robots genuinely human-level physical manipulation capabilities. But here’s the kicker: instead of just building better software to control existing robot hardware (which is what most AI labs do), Genesis built their own robotic hands from scratch. Human-scale. Five-fingered. Designed to move exactly like yours do.

This isn’t just another incremental improvement in robotics. This is a company saying “we’re tired of the limitations,” then spending $105 million in seed funding to rebuild the entire stack from the ground up.

Why “Full-Stack” Actually Matters Here

When tech companies throw around the term “full-stack,” they’re usually talking about software engineers who can work on both front-end and back-end code. Impressive, sure, but not exactly revolutionary.

When Genesis AI uses “full-stack,” it means something entirely different and far more ambitious. They’re controlling:

- The AI brain (the foundation model that processes information and makes decisions)

- The physical hardware (the actual robotic hands)

- The data collection system (a sensor-equipped glove that humans wear)

- The simulation environment (a virtual world where AI trains AI)

Why go through all that trouble? Because in robotics, the connection between intelligence and execution is everything. It’s like trying to become a concert pianist by practicing on a keyboard that’s missing half its keys and has the wrong spacing. You might develop some skills, but you’ll never truly master the instrument.

CEO Zhou Xian explained the pivot to TechCrunch: “The model has always been the goal, because a better model means better intelligence. But the company soon realized that it needed control over the hardware. So we decided to go full stack.”

The Demos That Make Engineers Stop Scrolling

Genesis AI released a demonstration video alongside their announcement, and honestly, it’s the kind of thing that makes robotics researchers pause their coffee midway to the mouth. We’re talking about tasks that have traditionally been used as examples of what robots can’t do:

Cooking a complete 20-step meal. Not microwaving a frozen dinner. Actually chopping tomatoes with a knife, cracking an egg with one hand (try that yourself; it’s harder than it looks), coordinating two hands to pour and blend a smoothie, and serving it mid-air without spilling.

Playing piano at a human performance level. Not pecking out “Chopsticks” one note at a time. Playing an ultra-fast, highly complex composition that requires coordinated finger movements, pressure sensitivity, and timing precision.

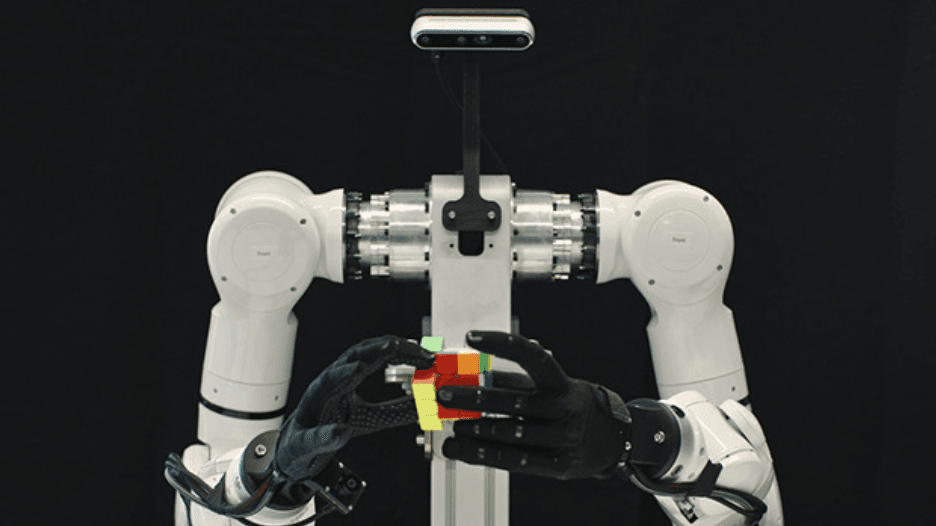

Solving a Rubik’s Cube while holding it in the air. This requires continuous manipulation, high-speed reasoning about the next moves, and precise wrist control, all simultaneously.

Wire harnessing. If you’ve never heard of this, it’s one of those unglamorous industrial tasks that’s incredibly difficult for robots. You’re taking multiple wires, organizing them into bundles, and securing them, which requires both fine motor control and spatial reasoning about how to route them efficiently.

Conducting high-precision lab experiments. Pipetting exact volumes of liquids, transferring them between containers without contamination, manipulating delicate glassware mid-air. This is the kind of work where a tiny mistake ruins an entire experiment.

Simultaneous multi-object grasping and sorting. Grabbing four objects of completely different sizes and shapes at the same time, then sorting them into the correct bins. That’s not just dexterity; it’s planning, perception, and execution happening in real time.

Now, let’s be real: these are controlled demos in a research facility. The lighting is perfect, the objects are exactly where the robot expects them, and we’re not seeing the failed attempts that surely happened during development. But even with those caveats, what’s being shown is genuinely several steps beyond what we’ve seen from commercial robotics systems.

The “Embodiment Gap” Problem Genesis Is Solving

Here’s a concept that doesn’t get enough attention outside robotics circles but is absolutely critical to understanding why Genesis’s approach matters: the embodiment gap.

Imagine trying to learn guitar by watching videos of someone playing piano, then attempting to translate what you learned onto a ukulele. The underlying concepts might transfer (music theory, rhythm, hand-eye coordination), but the actual physical execution? Good luck.

That’s the embodiment gap. It’s the difference between human form and robotic form, and it has absolutely crippled robots’ ability to learn from the vast amount of human demonstration data that exists in the world.

Think about it: there are millions of hours of video showing humans cooking, assembling electronics, conducting experiments, doing surgery, fixing cars: basically every physical task humans do. That’s an enormous training dataset, if only you could use it. But traditional robots are so different from humans in their physical form that most of that data is nearly useless. A video of a chef dicing an onion doesn’t help much if your robot has two pincer grippers instead of five-fingered hands.

Genesis AI’s solution? Build robotic hands that match human hands so closely that the embodiment gap essentially disappears. Their hands are human-scale, with the same number of fingers, similar range of motion, and comparable grip patterns.

Co-founder and President Théophile Gervet (formerly a research scientist at Mistral AI) put it simply: “If we could design a robotic hand that tries to mimic a human hand as much as possible, we can instantly unlock huge amounts of human data without having to worry about what people call the ‘embodiment gap’ in robotics research.”

Once you close that gap, suddenly all those YouTube cooking tutorials, all that egocentric video of people working in labs, all the footage of assembly line workers doing intricate manufacturing tasks, all of it becomes potential training data. You can teach a robot to cook by showing it thousands of humans cooking, because now your robot’s hands work like human hands.

The Data Collection Glove: Brilliant or Dystopian?

Here’s where things get clever, and slightly uncomfortable.

Genesis AI didn’t just build robotic hands. They also built a matching data collection glove that humans can wear. This glove is loaded with sensors and creates what the company calls a “1:1:1 mapping” between the human hand, the glove, and the robotic hand.

When someone wears this glove and performs a task (let’s say soldering circuit boards or pipetting chemicals in a lab), the glove captures every movement, every adjustment, every subtle correction. That data then becomes training material for GENE-26.5, teaching the AI how to control the robotic hands to perform the same task.

The economics here are striking. Genesis claims this glove costs 100 times less than typical teleoperation hardware used in robotics research. They also say it collects data five times more efficiently than traditional methods. And unlike clunky robotic control rigs, the glove is light enough to wear during actual work; it’s comparable to the safety gloves many workers already use.

Gervet explained the use case to TechCrunch: “For the first time, you can wear the data collection device when you’re doing your daily job, whether it’s a lab technician for pharma or for manufacturing.”

So imagine you’re a pharmaceutical technician. You wear this glove during your normal workday. Every complex procedure you perform (preparing compounds, running assays, handling sterile equipment), all of that expertise is being captured and converted into robot training data. Genesis AI gets to build what they’re calling “the world’s largest human skill library.”

But here’s where it gets ethically murky, and to their credit, the Genesis team isn’t pretending otherwise. Workers would essentially be training their own replacements. You’re wearing a glove that’s teaching a robot to do your job, possibly better than you can do it, potentially making your position obsolete.

When asked about this, Gervet acknowledged the tension: “We haven’t nailed the details yet.” The company says compensation and consent will be left to their customers and their employees to work out. They’re also exploring third-party data collection partners who would presumably be paid explicitly for the data.

It’s a thorny problem without easy answers. On one hand, automation has always displaced some workers while creating different opportunities. On the other hand, there’s something particularly direct about wearing a device that’s actively learning to replace you. Will workers consent? Will they receive royalties? Will unions get involved? These questions are coming, and they’re coming fast.

GENE-26.5: The Brain Behind the Hands

Let’s talk about the AI model itself. GENE-26.5 (named for May 2026) is what’s called a foundation model for robotics: think of it like GPT for physical tasks rather than language.

Foundation models are AI systems trained on enormous datasets that develop general capabilities, which can then be fine-tuned for specific applications. GPT-4 learned patterns from vast amounts of text and can write, analyze, translate, and code. GENE-26.5 learned patterns from physical manipulation data and can cook, assemble, experiment, and perform delicate tasks.

But here’s what makes it particularly interesting: the model is designed to be cross-platform. It’s not locked to Genesis AI’s hardware. According to the company, GENE-26.5 can run different types of robots, including systems built by other manufacturers.

That’s a strategic play that goes beyond selling robots. Genesis seems to be positioning themselves not just as a hardware company, but as the intelligence layer that could power many different robotic systems. Think about how Nvidia’s CUDA software became essential to AI computing regardless of whose hardware you ultimately bought. Genesis might be aiming for something similar in robotics.

The model’s capabilities come from three data sources working together:

- Direct teleoperation data from the glove: humans performing tasks while the system learns

- Egocentric video footage from head-mounted cameras capturing how humans interact with their environment from a first-person perspective

- Internet-scale video data: that massive library of YouTube tutorials, instructional videos, and documentary footage showing humans doing physical tasks

By closing the embodiment gap with human-like hands, Genesis can extract useful training signal from all three sources more effectively than competitors using traditional robot forms.

The Simulation Advantage Nobody’s Talking About Enough

There’s a less flashy but potentially more important part of Genesis’s announcement: their proprietary simulation system.

Physical testing of robots is brutally slow and expensive. You set up the robot, run a test, it fails, you adjust the code, set it up again, run another test, it fails differently. Repeat thousands of times. Each test takes time, risks damaging expensive hardware, and requires human supervision.

Simulation lets you compress that timeline dramatically. You create a virtual environment that mimics real-world physics, lighting, and material properties. Then you run thousands of tests in parallel, virtually, learning from each failure without breaking anything physical. The catch has always been the “sim-to-real gap”: virtual worlds never perfectly match reality, so robots trained in simulation often fail when deployed in the real world.

Genesis is claiming they’ve narrowed that gap significantly with what they call “hyper-realistic rendering and physics.” The more realistic the simulation, the more reliably skills learned virtually transfer to physical robots.

CEO Zhou Xian identified evaluation as the bottleneck: “The real bottleneck for the iteration speed of the model is evaluation. So this helps us speed up model training a lot.”

Here’s why this matters in practice: with a good simulation system, Genesis can test GENE-26.5 on thousands of variations of a task overnight. Different lighting conditions, different object placements, different hand starting positions, different disturbances. By the morning, they know what works and what doesn’t, all without touching a physical robot.

This creates what the company calls a “self-evolving cycle where AI trains the AI in a fully virtual environment.” The system runs simulations, identifies weaknesses, generates new training scenarios to address those weaknesses, trains on them, and repeats autonomously.
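To make that loop concrete, here is a toy sketch in Python. It is purely illustrative and is not Genesis’s system: the “model” is just a dictionary of skill scores, the “simulator” is a one-line pass/fail check, and every name in it (`evaluate`, `make_scenarios`, `self_improve`, the scenario kinds, the 0.3 training bump) is a made-up assumption. What it does show is the shape of the cycle: evaluate across randomized variations, find the weakest area, train on that area, repeat.

```python
import random


def evaluate(skill, scenario):
    """Toy stand-in for a simulated rollout (hypothetical, not a real simulator).

    The attempt succeeds when the model's skill for this kind of variation
    is at least as high as the scenario's difficulty.
    """
    return skill[scenario["kind"]] >= scenario["difficulty"]


def make_scenarios(kinds, n, rng):
    """Generate n randomized task variations with difficulty in [0, 1)."""
    return [{"kind": rng.choice(kinds), "difficulty": rng.random()} for _ in range(n)]


def self_improve(kinds, rounds=5, n_eval=200, seed=0):
    """Run the evaluate -> find weakness -> train -> repeat cycle."""
    rng = random.Random(seed)
    skill = {kind: 0.2 for kind in kinds}  # assumed starting skill per kind
    history = []
    for _ in range(rounds):
        # 1. Run simulations over many randomized variations.
        scenarios = make_scenarios(kinds, n_eval, rng)
        results = [(s, evaluate(skill, s)) for s in scenarios]
        history.append(sum(ok for _, ok in results) / n_eval)

        # 2. Identify weaknesses: count failures per kind of variation.
        failures = {kind: 0 for kind in kinds}
        for scenario, ok in results:
            if not ok:
                failures[scenario["kind"]] += 1
        weakest = max(failures, key=failures.get)

        # 3. "Train" on the weakest kind (a fixed bump stands in for training).
        skill[weakest] = min(1.0, skill[weakest] + 0.3)
    return skill, history


if __name__ == "__main__":
    kinds = ["lighting", "object_placement", "hand_start_pose"]
    final_skill, success_rates = self_improve(kinds)
    print(final_skill)
    print(success_rates)
```

In a real pipeline each of those three steps would be enormously more expensive and much less tidy, but the structure is why evaluation speed matters so much: the loop can only turn as fast as step 1 runs.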

If that actually works as advertised (big if), it means Genesis can iterate on their model orders of magnitude faster than competitors relying primarily on physical testing. Fast iteration means more improvement per dollar spent and faster time to deployment.

The Competitive Landscape: Who’s Chasing Similar Goals?

Genesis AI isn’t operating in a vacuum. The race to build general-purpose robots with human-level dexterity is arguably the hottest area in robotics right now, and the funding reflects it.

Physical Intelligence raised a massive $400 million round and is working on similar problems: foundation models for robotic manipulation. They’re taking a somewhat different approach, focusing on learning from large-scale interaction data rather than building custom hardware.

Skild AI is reportedly valued at $4 billion (yes, billion) and is building what they call “a general-purpose brain for robots.” They’re more focused on the software/AI side, working with existing robotic platforms.

Figure AI is building humanoid robots with backing from tech giants and is focused on creating general-purpose humanoid workers for industrial applications. They’ve demonstrated their robot performing warehouse tasks and are moving toward commercial deployment.

1X Technologies (formerly Halodi Robotics) is also in the humanoid space, with robots designed for security, logistics, and eventually home assistance.

What distinguishes Genesis? The full-stack approach. Most competitors are either:

- Building great AI but using off-the-shelf hardware, or

- Building impressive hardware but licensing AI from elsewhere, or

- Focusing on one specific form factor (like humanoids)

Genesis is simultaneously building the brain, the hands, the data collection tools, and the simulation environment. That’s more expensive and riskier, but if successful, it gives them a level of integration and optimization that’s hard to match.

Théophile Gervet explained the philosophy: “We believe winning in robotics requires excellence at every level. That’s why we’re obsessed with innovating across the full-stack, from AI to hardware. By controlling every layer, we can build a cohesive system and solve the problem holistically.”

The $105 Million Question: Can They Actually Deliver?

Genesis AI emerged from stealth in July 2025 with $105 million in seed funding, matching the record French seed round previously held by Mistral AI (which, not coincidentally, is where co-founder Gervet came from).

The investor list reads like a who’s who of tech: Khosla Ventures, Eclipse, Bpifrance, HSG, former Google CEO Eric Schmidt, French telecom billionaire Xavier Niel, MIT robotics legend Daniela Rus, and AI researcher Vladlen Koltun.

That’s serious backing from serious people. Eric Schmidt specifically called this “a paradigm shift in robotics” and “an important milestone for the team and the robotics industry more broadly.” Vinod Khosla said Genesis is “changing the trajectory of robotics, bringing us closer to AI that can operate in the real world.”

But let’s inject some realism here. Demo videos filmed in controlled conditions are fundamentally different from robots operating reliably in messy, unpredictable real-world environments for extended periods.

Cooking that impressive 20-step meal in a pristine California research facility? That’s an achievement. Having that robot cook in a commercial kitchen during dinner rush, handling whatever ingredients are available, adapting when a customer changes their order, working around human chefs moving unpredictably, and doing this reliably day after day for years? That’s the actual challenge.

Similarly, that wire harnessing demo looks great. But can the system handle the vibrations, lighting variations, material inconsistencies, and ergonomic constraints of a real automotive assembly line? Can it do it fast enough to keep up with production speeds? Can it do it cheaply enough to make economic sense compared to human workers?

Those questions aren’t answered yet. The company has 60 employees across Paris, California, and London, having recently expanded to London specifically to tap into European talent. They’re hiring aggressively. They’re in talks with potential customers in France, Germany, and Italy.

But they haven’t shipped a product yet. They’ve announced they’ll “soon unveil” their first general-purpose robot, a full-body system and not just the hands. What “soon” means in startup time is anyone’s guess.

What This Means for Industries Watching Closely

If Genesis can deliver even 70% of what they’re demonstrating, several industries should be paying very close attention:

Manufacturing and Electronics Assembly

Wire harnessing, precision assembly, quality inspection: these are tasks humans currently do because robots haven’t been dexterous enough. If GENE-26.5 can handle this work reliably, it could transform electronics manufacturing, automotive assembly, and aerospace production.

The appeal isn’t just labor cost. It’s consistency, working 24/7, doing tasks in conditions unsafe for humans (extreme temperatures, toxic fumes), and handling pieces so small or delicate that human error rates are high.

Pharmaceutical and Biotechnology Labs

Lab work is notoriously tedious and error-prone. Pipetting exact volumes hundreds of times per day, preparing compound libraries, running assays, managing sterile techniques. These tasks require precision, repeatability, and documentation, all things robots excel at. The challenge has been the dexterity and adaptability needed to handle diverse glassware, adjust to different protocols, and respond when something goes wrong.

If Genesis’s robots can operate in these environments, they could dramatically accelerate drug discovery and development while reducing human exposure to hazardous materials.

Food Service and Preparation

That cooking demo wasn’t just showboating. Food preparation combines nearly every difficult manipulation challenge: cutting (requires sharp tools and variable force), pouring (requires feedback and adjustment), combining ingredients (requires sequencing and timing), and plating (requires aesthetic judgment).

We’re probably not talking about robots replacing chefs at Michelin-starred restaurants anytime soon. But institutional food service, meal prep services, and fast-casual restaurants? That’s a realistic near-term target.

Healthcare and Medical Assistance

The demos showed delicate manipulation, precise movements, and adaptive control, all critical for medical applications. Surgical assistance, patient mobilization, medication preparation and distribution, rehabilitation therapy. These are high-value applications where the combination of dexterity and reliability could genuinely improve outcomes.

The Open Questions That Will Define Success

As impressive as the announcement is, some major questions remain unanswered:

How much does it actually cost? They’ve raised $105 million, which is a lot for most businesses but not huge for full-stack robotics development. The glove is supposedly cheap, but what about the hands themselves? The full robot they’re planning to reveal? Can they hit price points that make commercial sense?

How reliable is it over time? Demo performances and sustained operation are completely different. How often does the system fail? How long does it take to recover from failures? What’s the maintenance requirement? These questions determine whether it’s a research curiosity or a commercial product.

How fast can it learn new tasks? They’re claiming the model generalizes, but how much fine-tuning is needed for each new application? If a customer wants to deploy this in their facility for a task Genesis hasn’t explicitly trained on, what’s that process like?

What about regulatory approval? For medical, pharmaceutical, and food handling applications, regulatory bodies will have a lot to say. How long will those approval processes take?

Can they scale manufacturing? Building a handful of prototypes in a lab is one thing. Manufacturing thousands of robotic hands with the necessary precision and reliability is a completely different challenge. They’ll need to figure out supply chains, quality control, assembly processes, and all the unglamorous but critical aspects of hardware manufacturing.

How will they handle the ethics of job displacement? That data glove issue isn’t going away. As deployment conversations get serious, they’ll need to have better answers than “we’ll let our customers figure it out.”

The Bigger Picture: Are We At an Inflection Point?

Step back for a moment from Genesis specifically and look at what’s happening across robotics as a whole.

We’re seeing massive capital flowing into the space: Genesis’s $105 million seed, Physical Intelligence’s $400 million, Skild AI’s $4 billion valuation. That’s not speculative money chasing hype (okay, some of it is). Much of it is coming from investors with serious technical backgrounds who understand the domain.

At the same time, the AI foundation model paradigm that worked for language and images is now being applied to physical manipulation. We’re seeing improvements in simulation quality. Data collection costs are dropping. Computing power keeps getting cheaper.

And crucially, we’re seeing companies willing to take on the full-stack integration challenge rather than just working on the AI side. Genesis AI, Figure AI, 1X Technologies: they’re all building both hardware and software in integrated systems.

This combination of factors (money, talent, technical approaches, and willingness to tackle integration) suggests we might genuinely be at an inflection point. Not the “robots are coming for all jobs tomorrow” inflection point that gets hyped in tech media. But a more realistic “robots can now handle tasks that were impossible five years ago, and that list is growing fast” inflection point.

If Genesis AI succeeds (and that’s still a big if), they won’t be the only ones doing this kind of work. They’ll be part of a wave that changes what robots can do and where they can operate.

What Comes Next: Timeline and Expectations

Genesis says they’ll reveal their first general-purpose robot “soon.” They’re currently in talks with potential customers. They’re hiring across three continents. They’re planning to raise more capital (though they’ve ruled out going public anytime soon).

Zhou Xian told TechCrunch their goal remains unchanged: “Our goal is to build the most capable robotic system.”

Translation: they’re not rushing a product to market just to show revenue. They’re continuing to develop the technology until it’s genuinely capable enough to deliver on the “human-level manipulation” promise.

That’s admirable from a technical standpoint. From a business standpoint, it means they’ll burn through that $105 million seed funding before generating meaningful revenue. Which means they’ll need to raise again. Which means they’ll need to show continued progress in demos and partnerships to maintain investor confidence.

The cycle of startup life: raise money, build technology, show progress, raise more money, build more technology, eventually ship a product, iterate based on feedback, scale. Genesis is currently somewhere between the “show progress” and “ship a product” phases.

Bottom Line: Cautious Optimism Warranted

Here’s my take after digging through all this: Genesis AI is doing genuinely impressive work, backed by serious people, attacking real problems with a thoughtful technical approach.

The full-stack strategy makes sense. The embodiment gap is a real issue, and building human-like hands to solve it is logical. The data collection glove is clever economics. The simulation approach could genuinely accelerate development.

But, and this is important, they haven’t shipped anything yet. The demos are impressive but controlled. The economic model is unproven. The ethical questions around job displacement are unresolved. The path from “impressive lab results” to “reliable commercial product” is littered with failed robotics companies.

So where does that leave us?

Watch this space. Genesis is worth paying attention to. If you’re in manufacturing, pharma, food service, or any industry involving complex physical manipulation, stay informed about their progress. If they succeed, they won’t be the only ones offering this capability, but they might be first.

If you’re a worker in those industries, start thinking about how automation might change your role. Not in a panic “my job is disappearing tomorrow” way, but in a strategic “what skills will be valuable when robots can handle routine manipulation tasks” way. The answer is probably things like judgment, creativity, complex problem-solving, and coordination, the things humans are still dramatically better at.

And if you’re an investor, researcher, or founder in the robotics space, Genesis just raised the bar for what “impressive” looks like in 2026. The combination of AI brain, custom hardware, clever data collection, and powerful simulation isn’t just a cool demo; it might be the template for how full-stack robotics companies operate going forward.

The age of truly dexterous robots isn’t here yet. But Genesis AI just made it look a lot closer than it did yesterday.

And that’s worth paying attention to.
